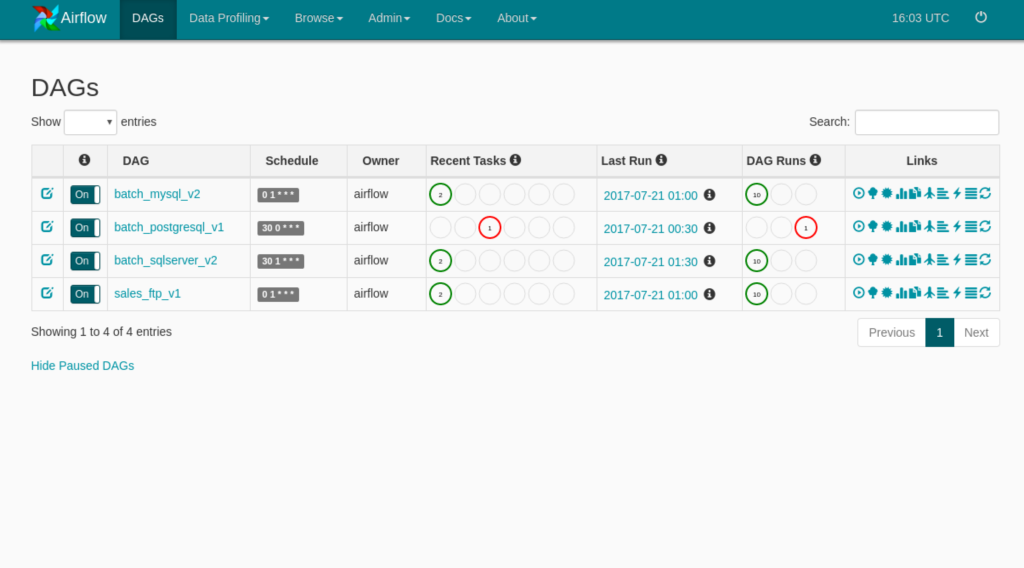

The DAGs list may not update, and new tasks will not be scheduled. 4) Delete all files in the AIRFLOW_HOME directory and run airflow db init. Of course you get something on (Linux) shell … at 15:50.

I have successfully installed Apache Airflow locally via pip. This might be related to the warning "No user yet created"; run the following in your terminal: FLASK_APP=airflow. Use the same configuration across all the Airflow components. However, I wanted to daemonize the setup and used the instructions here. err … Whenever I start the upstart job, the airflow-webserver job goes straight to stop/waiting. 1s ⠿ Container airflow … This tutorial provides a step-by-step guide through all the crucial concepts of deploying Airflow 2. Using the above approach you can avoid the port conflict and fix the "docker driver failed programming external connectivity on endpoint web server" issue. This means you’d typically use execution_date together with next_execution_date to indicate the full interval. You need to change you … Step 6: Set up the Airflow webserver and scheduler to start automatically. After reading through the source code of cli. I didn't find it either; in the running-airflow-locally section they seem to assume you're using a Unix-based machine. service sudo systemctl start airflow-webserver. service Description=Airflow webserver daemon After=network. Basically, you need to implement a custom log handler and configure Airflow logging to use that handler instead of the default (See UPDATING. js and Gitlab container registry on Ubuntu 20. The ports we will be targeting are 8080 for Apache Airflow and 8888 for Jupyter Notebook. The workaround is: from the Docker tray menu, select Restart to restart Docker. Creating robust International Trade data pipelines using Apache Airflow. The only way I got the script to work was by getting these environment variables defined before running an HTTP request: os. 8): -D, --daemon Daemonize instead of running in the foreground. This procedure assumes familiarity with Docker and Docker Compose. I have configured Airflow with a MySQL metadata database and the LocalExecutor.
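Since the port conflict on 8080 and 8888 comes up above, here is a minimal sketch of how one might check whether those ports are already taken before starting the webserver or the containers. The helper name `port_in_use` and the default host `127.0.0.1` are assumptions made for illustration; nothing here is part of Airflow or Docker.

```python
import socket


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if something already accepts TCP connections on host:port.

    Hypothetical helper for illustration only; not part of Airflow.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(0.5)
        # connect_ex returns 0 when the connection succeeds, an errno otherwise.
        return sock.connect_ex((host, port)) == 0


if __name__ == "__main__":
    # 8080 and 8888 are the ports named in the text above.
    for name, port in (("Airflow webserver", 8080), ("Jupyter Notebook", 8888)):
        state = "in use" if port_in_use(port) else "free"
        print(f"{name} port {port}: {state}")
```

If a port is reported as in use, either stop the process holding it or map the service to a different host port.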
In this article, DAG sharing between the web server, scheduler, and Kubernetes Executor was solved using S3, which is relatively easy to use, and redundancy of the web server and scheduler was also configured using a load balancer. For information about creating an Astro project, see … If your apache-airflow installation requires redis or rabbitmq services, then you can specify the dependency here.

- EnvironmentFile: specifies the file where the service will find its environment variables.
- User: set your actual username here; the specified user ID will be used to invoke the service.
- ExecStart: here you can specify the command to be …

AIRFLOW_CORE_FERNET_KEY=some value AIRFLOW_WEBSERVER_SECRET_KEY=some value The setup itself seems to be working, as confirmed by an exposed config in the webserver GUI: link to a screenshot. 2 version in airflow webserver, I am still seeing the 3DES cipher warning and broken RC4. service rabbitmq Setting up Airflow on AWS Linux was not straightforward because of outdated default packages. When nonzero, airflow periodically refreshes webserver workers by bringing up new ones and killing old ones. Everything was working before yesterday (3/23), and this issue is fairly recent. Here is my config file definition: #airflow-webserver. As an additional troubleshooting step, I tried running "airflow webserver" as the user like this: sudo -s -u airflow webserver. 15 airflow webserver starting - gunicorn workers shutting down. So far I've installed docker and docker-compose, then followed the puckel/docker-airflow readme, skipping the optional build, then tried to run the container by: docker run -d -p 8080:8080 puckel/docker-airflow webserver.
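The unit-file fields described above (the service dependency, EnvironmentFile, User, ExecStart) can be put together as in the rough sketch below, which renders the text of a systemd unit from a few parameters. The function name `render_unit`, the `WantedBy=multi-user.target` line, and every value in the usage example (user name, paths, the `redis.service` dependency) are assumptions for illustration, not values taken from the original setup.

```python
from typing import Optional, Sequence


def render_unit(
    description: str,
    user: str,
    environment_file: str,
    exec_start: str,
    after: Optional[Sequence[str]] = None,
) -> str:
    """Render a minimal systemd service unit as text (illustrative sketch)."""
    lines = ["[Unit]", f"Description={description}"]
    if after:
        # After= takes a space-separated list of units to start after.
        lines.append("After=" + " ".join(after))
    lines += [
        "",
        "[Service]",
        f"EnvironmentFile={environment_file}",  # file holding the env variables
        f"User={user}",                         # account that runs the service
        f"ExecStart={exec_start}",              # command that starts the service
        "",
        "[Install]",
        "WantedBy=multi-user.target",           # assumption: a common default
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    print(
        render_unit(
            description="Airflow webserver daemon",
            user="airflow",
            environment_file="/etc/sysconfig/airflow",
            exec_start="/usr/local/bin/airflow webserver",
            after=["redis.service"],
        )
    )
```

The rendered text would typically be saved under the systemd unit directory and followed by a daemon reload; check the paths and unit names against your own installation before using anything like this.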

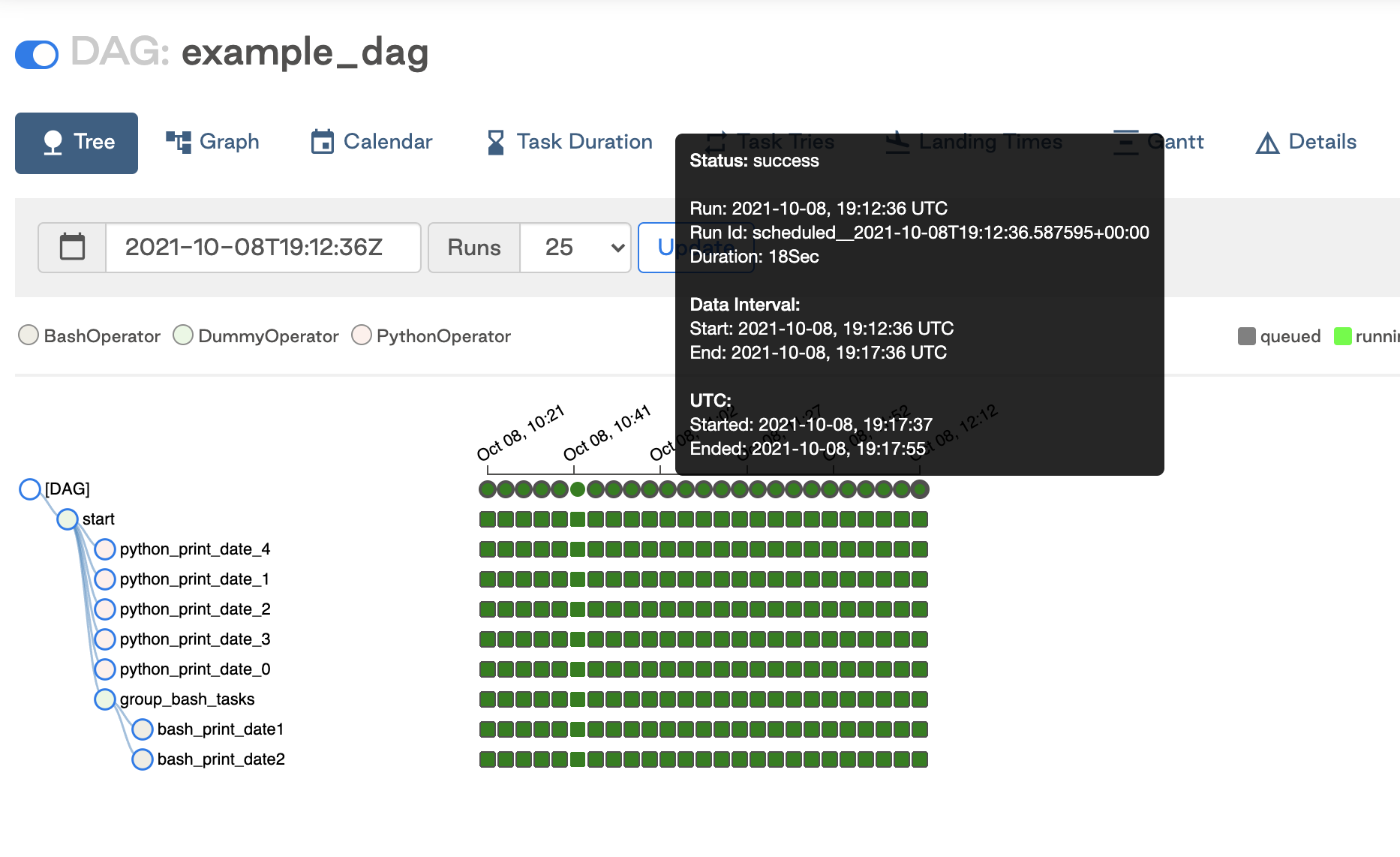

I then stop … I am able to run airflow test tutorial print_date as per the tutorial docs successfully: the DAG runs, and moreover print_double succeeds. I have set up Airflow on an Ubuntu server. 7) running on a machine (CentOS 7) for a long time. Using Airflow in a … Airflow has a very rich command line interface that allows for many types of operation on a DAG, starting services, and supporting development and testing. Last heartbeat was received 5 minutes ago. I don't understand why, since the webserver should look in … I would like to run the scheduler as a daemon process with. Run the following two commands to run the Airflow webserver and scheduler in daemon mode: airflow webserver -D and airflow scheduler -D. (Image 8: Start Airflow and Scheduler; image by author.) Let’s make sure our OS is up to date. cfg after you save the file, you should run airflow db init and start the Airflow webserver again with airflow webserver -D. Starting and Managing the Apache Airflow Unit Files.

ResolutionError: No such revision or branch '449b4072c2da' seems to refer to an existing alembic revision number.
